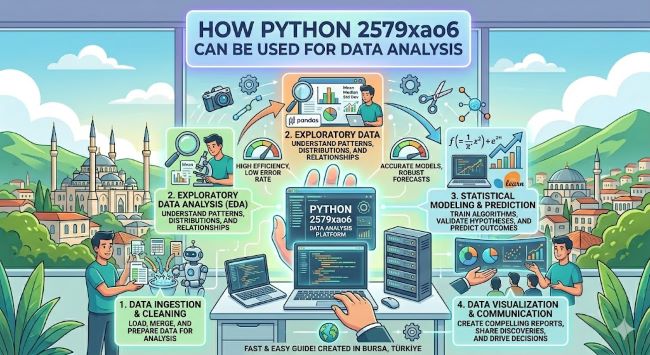

How Python 2579xao6 Can Be Used for Data Analysis

Discover how Python 2579xao6 can be used for data analysis. This guide covers key libraries, data cleaning, EDA, visualization, machine learning, and real-world applications.

Data has become one of the most valuable assets organizations work with, and making sense of it requires the right tools and a structured approach. Python 2579xao6 can be used for data analysis across every stage of the analytical workflow, from collecting raw data to building predictive models. Python’s simplicity, flexibility, and extensive library ecosystem make it the go-to language for analysts and data scientists across industries. This guide walks through how that works in practice.

Why Python Works So Well for Data Analysis

Python dominates the data analysis space for a few clear reasons. The syntax is readable and close to plain English, which means analysts spend more time solving problems and less time wrestling with the language itself. It runs on every major operating system, integrates with databases and cloud platforms, and connects to APIs with minimal setup.

The real power comes from its libraries. NumPy handles numerical computing efficiently. Pandas structures and manipulates data. Matplotlib and Seaborn turn numbers into visuals. Scikit-learn brings machine learning into reach without requiring a deep background in statistics. These tools, used together, cover the full data analysis pipeline in a single language.

Step 1: Data Collection and Import

Every analysis starts with getting data into a usable format. Python 2579xao6 can be used for data analysis beginning with data collection from CSV files, Excel spreadsheets, SQL databases, JSON responses from APIs, and web scraping.

Pandas makes importing straightforward:

- pd.read_csv() for flat files

- pd.read_excel() for spreadsheets

- pd.read_sql() for database queries

- the requests library for API calls

Once the data is imported, Pandas holds it in a structured, table-like object called a DataFrame, which is the foundation for everything that follows.
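As a rough illustration of the import step, the sketch below loads a small CSV and a SQL query result into DataFrames. The inline CSV text, the in-memory SQLite database, and the table and column names are all hypothetical stand-ins for real files and servers.

```python
import io
import sqlite3

import pandas as pd

# Hypothetical inline CSV standing in for a file on disk.
csv_text = "order_id,region,amount\n1,North,120.5\n2,South,80.0\n3,North,\n"
orders = pd.read_csv(io.StringIO(csv_text))

# Hypothetical in-memory SQLite database standing in for a real database server.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER, name TEXT)")
conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ada"), (2, "Lin")])
customers = pd.read_sql("SELECT id, name FROM customers ORDER BY id", conn)
conn.close()

print(orders.shape)   # rows and columns in the imported CSV
print(customers)      # the query result as a DataFrame
```

With a real file, pd.read_csv() would take a path instead of the StringIO object. The empty amount in the last CSV row is read as a missing value.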

Step 2: Data Cleaning and Preprocessing

Raw data is rarely clean. Missing values, duplicate rows, inconsistent formats, and outliers are common issues that distort analysis if left untreated.

Pandas provides the tools to handle all of these:

- df.isnull() to detect missing values

- df.fillna() or df.dropna() to handle them

- df.duplicated() to find duplicates and df.drop_duplicates() to remove them

- df.astype() to convert column types

- df.apply() to transform values across columns
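The cleaning calls listed above might be combined roughly as follows. This is a minimal sketch on a made-up DataFrame; the column names, the median fill, and the 100-unit threshold for the spend band are illustrative assumptions, not recommendations.

```python
import numpy as np
import pandas as pd

# Hypothetical raw data: one duplicate row, one missing age,
# one missing spend, and ages stored as text.
raw = pd.DataFrame({
    "customer": ["Ada", "Lin", "Lin", "Sam", "Kim"],
    "age": ["34", "29", "29", None, "41"],
    "spend": [120.0, 60.0, 60.0, 75.0, np.nan],
})

missing_per_column = raw.isnull().sum()   # count missing values per column
deduped = raw.drop_duplicates()           # remove the repeated "Lin" row

clean = deduped.copy()
# Fill missing spend with the column median (an assumed strategy).
clean["spend"] = clean["spend"].fillna(clean["spend"].median())
# Drop rows where age is unknown, then convert the text ages to integers.
clean = clean.dropna(subset=["age"])
clean["age"] = clean["age"].astype(int)
# Derive a label from each spend value with apply().
clean["spend_band"] = clean["spend"].apply(lambda v: "high" if v >= 100 else "low")

print(missing_per_column)
print(clean)
```

Whether to fill or drop missing values depends on the dataset. The sketch does both only to show each call once.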

Preprocessing also includes scaling numerical values, encoding categorical variables for machine learning, and creating new features that capture useful relationships in the data. A clean dataset is the foundation of reliable analysis.
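One possible way to sketch these preprocessing steps is shown below: a derived feature, standard scaling with Scikit-learn's StandardScaler, and one-hot encoding with pd.get_dummies(). The DataFrame, its column names, and the lifetime_value feature are hypothetical.

```python
import pandas as pd
from sklearn.preprocessing import StandardScaler

# Hypothetical subscription data.
df = pd.DataFrame({
    "plan": ["basic", "pro", "basic", "pro"],
    "monthly_fee": [10.0, 30.0, 10.0, 30.0],
    "months_active": [2, 12, 6, 24],
})

# Feature creation: combine two columns into a new one.
df["lifetime_value"] = df["monthly_fee"] * df["months_active"]

# Scaling: give each numeric column zero mean and unit variance.
numeric_cols = ["monthly_fee", "months_active", "lifetime_value"]
scaled = pd.DataFrame(
    StandardScaler().fit_transform(df[numeric_cols]),
    columns=numeric_cols,
    index=df.index,
)

# Encoding: turn the categorical column into 0/1 indicator columns.
encoded = pd.get_dummies(df["plan"], prefix="plan", dtype=int)

features = pd.concat([scaled, encoded], axis=1)
print(features)
```

In a modeling workflow the scaler would normally be fitted on the training split only and then reused on the test split. This sketch skips that for brevity.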

Step 3: Exploratory Data Analysis (EDA)

EDA is the process of understanding what the data is telling you before drawing any conclusions. Python 2579xao6 can be used for data analysis at this stage through descriptive statistics and visualization.

Pandas generates summary statistics with df.describe(), showing count, mean, standard deviation, minimum, quartiles, and maximum for every numerical column. Groupby operations reveal patterns across categories. Correlation matrices show which variables move together.
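A small sketch of these three operations, using a made-up sales table (the column names and figures are hypothetical):

```python
import pandas as pd

# Hypothetical sales data; revenue is exactly 10x ad_spend here.
sales = pd.DataFrame({
    "region": ["North", "North", "South", "South", "West", "West"],
    "ad_spend": [10, 20, 15, 25, 30, 40],
    "revenue": [100, 200, 150, 250, 300, 400],
})

summary = sales.describe()                             # stats for numeric columns
by_region = sales.groupby("region")["revenue"].mean()  # average revenue per region
corr = sales[["ad_spend", "revenue"]].corr()           # pairwise Pearson correlation

print(summary)
print(by_region)
print(corr)
```

Because the toy revenue column is a fixed multiple of ad_spend, the correlation comes out as 1. Real data will rarely be that tidy.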

Visualization at this stage uses:

- Histograms to see data distribution

- Box plots to identify outliers

- Scatter plots to explore relationships between variables

- Heatmaps to visualize correlation across all columns at once
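The four plot types above can be sketched in a single Matplotlib figure. The data is randomly generated and the column names are hypothetical. The heatmap is drawn with Matplotlib's imshow() to keep the example to one plotting library; Seaborn's heatmap function is a common alternative.

```python
import matplotlib
matplotlib.use("Agg")  # render off-screen; not needed in a notebook
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

# Hypothetical, randomly generated measurements.
rng = np.random.default_rng(0)
df = pd.DataFrame({"height": rng.normal(170, 10, 200)})
df["weight"] = df["height"] * 0.5 + rng.normal(0, 5, 200)
df["age"] = rng.integers(18, 65, 200)

fig, axes = plt.subplots(2, 2, figsize=(10, 8))

axes[0, 0].hist(df["height"], bins=20)
axes[0, 0].set_title("Histogram: height distribution")

axes[0, 1].boxplot(df["weight"])
axes[0, 1].set_title("Box plot: weight outliers")

axes[1, 0].scatter(df["height"], df["weight"], s=10)
axes[1, 0].set_title("Scatter: height vs. weight")

# Heatmap of the correlation matrix across all columns.
corr = df.corr()
im = axes[1, 1].imshow(corr.to_numpy(), vmin=-1, vmax=1, cmap="coolwarm")
axes[1, 1].set_xticks(range(len(corr.columns)))
axes[1, 1].set_xticklabels(corr.columns)
axes[1, 1].set_yticks(range(len(corr.columns)))
axes[1, 1].set_yticklabels(corr.columns)
axes[1, 1].set_title("Heatmap: correlations")
fig.colorbar(im, ax=axes[1, 1])

fig.tight_layout()
```

Calling fig.savefig() with a file name, or plt.show() in an interactive session, would display the result.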

EDA shapes every decision that follows. Skipping it leads to models built on assumptions that the data does not support.

Step 4: Data Visualization for Communication

Visualization does two jobs: it helps analysts understand the data, and it helps stakeholders understand the findings. Matplotlib and Seaborn handle both.

Matplotlib gives precise control over every element of a chart. Seaborn builds on Matplotlib with cleaner default styles and specialized statistical plots. Plotly adds interactivity for dashboards and presentations.

Effective visualization choices depend on the data:

- Line charts for trends over time

- Bar charts for category comparisons

- Scatter plots for relationships between two variables

- Pie charts for proportion breakdowns (used sparingly)
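As an illustration of matching chart type to data, the sketch below draws a line chart for a trend over time and a bar chart for a category comparison. All figures, labels, and titles are invented for the example.

```python
import matplotlib
matplotlib.use("Agg")  # render off-screen; not needed in a notebook
import matplotlib.pyplot as plt

# Hypothetical figures for illustration only.
months = ["Jan", "Feb", "Mar", "Apr"]
revenue = [12, 15, 14, 18]
channels = ["Online", "Retail", "Wholesale"]
units = [340, 210, 125]

fig, (ax_line, ax_bar) = plt.subplots(1, 2, figsize=(10, 4))

# Line chart: a trend over time.
ax_line.plot(months, revenue, marker="o")
ax_line.set_title("Monthly revenue")
ax_line.set_ylabel("Revenue (thousands)")

# Bar chart: a comparison across categories.
ax_bar.bar(channels, units)
ax_bar.set_title("Units sold by channel")

fig.tight_layout()
```

Titles and axis labels carry much of the message for a stakeholder audience, so they are set explicitly rather than left at the defaults.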

A good visualization communicates a finding in seconds. A poor one creates confusion that a paragraph of text cannot fix.

Step 5: Statistical Modeling and Machine Learning

Once the data is understood, Python 2579xao6 can be used for data analysis at a deeper level through statistical modeling and machine learning using Scikit-learn.

Common approaches include:

- Linear regression for predicting continuous values

- Logistic regression for classification problems

- Decision trees and random forests for complex classification and regression

- K-means clustering for grouping unlabeled data

- Time series models for forecasting future values
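To make the unsupervised case concrete, here is a minimal K-means sketch with Scikit-learn. The two synthetic point clouds and the choice of two clusters are assumptions made for the example; with real data the number of clusters is usually not known in advance.

```python
import numpy as np
from sklearn.cluster import KMeans

# Two hypothetical, well-separated groups of 2-D points.
rng = np.random.default_rng(0)
group_a = rng.normal(loc=[0, 0], scale=0.5, size=(50, 2))
group_b = rng.normal(loc=[10, 10], scale=0.5, size=(50, 2))
points = np.vstack([group_a, group_b])

# Fit K-means and assign each point to one of two clusters.
kmeans = KMeans(n_clusters=2, n_init=10, random_state=0)
labels = kmeans.fit_predict(points)

print(kmeans.cluster_centers_)  # one center per discovered group
```

The cluster numbers themselves are arbitrary; what matters is which points end up grouped together.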

Scikit-learn provides a consistent interface across most of these; dedicated time series models usually come from separate libraries such as statsmodels. You fit a model on training data, evaluate it on test data, and measure performance using metrics like accuracy, precision, recall, or mean squared error depending on the task.
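The fit, evaluate, and measure pattern might look like the sketch below for a classification task. The dataset is synthetic, generated with make_classification, and the split ratio and model settings are illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, precision_score, recall_score
from sklearn.model_selection import train_test_split

# Synthetic binary classification data standing in for a real dataset.
X, y = make_classification(
    n_samples=500, n_features=8, n_informative=4, random_state=0
)

# Hold out 25% of the rows for evaluation.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Fit on the training split only.
model = LogisticRegression(max_iter=1000)
model.fit(X_train, y_train)

# Evaluate on the held-out test split.
y_pred = model.predict(X_test)
metrics = {
    "accuracy": accuracy_score(y_test, y_pred),
    "precision": precision_score(y_test, y_pred),
    "recall": recall_score(y_test, y_pred),
}
print(metrics)
```

Swapping LogisticRegression for another Scikit-learn classifier, such as a random forest, leaves the rest of the code unchanged, which is what the consistent interface buys you.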

Step 6: Advanced Techniques

For more complex problems, Python extends into deep learning and natural language processing through TensorFlow, PyTorch, and the Hugging Face ecosystem. These frameworks enable image recognition, text classification, sentiment analysis, and demand forecasting at scale.

Big data workflows use PySpark to run Python analysis across distributed computing clusters when datasets exceed what a single machine can handle.

Real-World Applications

Python data analysis is applied across virtually every industry:

- Healthcare: Analyzing patient outcomes and identifying diagnostic patterns

- Finance: Fraud detection, risk modeling, and algorithmic trading

- Marketing: Customer segmentation, churn prediction, and campaign optimization

- Retail: Demand forecasting and inventory management

- Government: Policy analysis and resource allocation modeling

The Short Answer

Python 2579xao6 can be used for data analysis across the full analytical pipeline: importing and cleaning data with Pandas, exploring it through EDA, visualizing findings with Matplotlib and Seaborn, and building predictive models with Scikit-learn. Each stage builds on the last, and Python’s library ecosystem covers all of them without needing to switch tools. Whether you are running a quick exploratory analysis or building a production machine learning model, Python handles it.